The World as a Surface: Storing Digital Objects in Real-World Landmarks

Description

A wide variety of computing devices now exist that demand very different styles of interaction from those common on a desktop workstation. For example, mobile phones, gaming devices, domestic technology, ‘couch computing,’ and entertainment systems all have non-standard requirements and opportunities for interacting with information. In particular, selection is different on these devices than on a desktop PC. Both the input and the output aspects of selection are altered. On the output side, there is generally far less screen space available to show selection items, and the user may not be able to look at the display at all. On the input side, the devices used to make selections must work within the constraints of the device and the environment (e.g., a mobile context makes it difficult to use precision devices like the mouse).

These restrictions on input and output, however, do not mean that the information and selection tasks in these contexts are any less complex: people may still have dozens of contacts, favorite TV shows, applications, or playlists that they need to select from, and the new devices must support these tasks. We need new ways of interacting with information in new contexts, ways that are not strongly oriented towards the full visual presentation and precise movement capabilities of the desktop PC. We are particularly interested in techniques that allow fast and broad eyes-free interaction – techniques that reduce the user’s dependence on a visual display, that can be used in a mobile or domestic context, and that can provide fast access to dozens of items.

A few techniques in this space have already been proposed, in which selection is based on audio cues, tapping input, body parts, regions in the space around the user, or small real-world objects. Although these techniques are all useful, each suffers from one of two problems: it is either relatively slow (e.g., audio cues for selection require linear interaction with a list of items), or it is limited in the number of items it can store (e.g., the number of accessible body parts or body-centric regions is limited). There is therefore a need for techniques that can store a large number of items and that make retrieval of any of those items fast.

We have developed one such technique, called WorldPointing, that meets these goals. WorldPointing is based on the idea of storing digital artifacts in the real-world environment: for example, storing a particular music playlist in a photograph on the mantelpiece. The technique does not literally store data in the real-world object; rather, the digital artifact is mapped to the precise location of the real object. To retrieve a mapped digital artifact, the user simply points at the real-world object and presses a button on their device. Pointing at and selecting the photograph where the user’s playlist is stored, for example, would open that playlist in the device’s music player. WorldPointing is similar to both Virtual Shelves and Body Mnemonics, but dramatically increases both the number of items that can be stored and the memorability of the mapping, by using objects in the real environment as indexes into the selection set. Not only are there more landmarks in the immediate environment to use as locations for digital content, but the WorldPointing technique is also location-sensitive, meaning that a new set of mappings is available in every new location.
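The mapping and retrieval steps described above can be illustrated with a minimal sketch. It is not the WorldPointing implementation: the `Landmark` type, the `select_artifact` function, the per-location dictionary, the coordinate values, and the 15-degree angular tolerance are all hypothetical choices made for illustration. The sketch assumes the device can report its own position and pointing direction, and it selects the landmark whose direction from the device is closest in angle to the pointing direction.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class Landmark:
    """A real-world object whose position indexes one digital artifact (hypothetical type)."""
    name: str
    position: Vec3   # assumed room coordinates, in metres
    artifact: str    # identifier of the mapped digital artifact


def _angle_between(a: Vec3, b: Vec3) -> float:
    """Return the angle in radians between two vectors (pi if either has zero length)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return math.pi
    cosine = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.acos(cosine)


def select_artifact(
    mappings: Dict[str, List[Landmark]],
    location: str,
    device_position: Vec3,
    pointing_direction: Vec3,
    max_angle_deg: float = 15.0,  # assumed tolerance, not taken from the project
) -> Optional[str]:
    """Return the artifact mapped to the landmark best aligned with the pointing ray.

    Only the landmarks registered for the current location are considered,
    which models the location-sensitive behaviour described in the text.
    """
    best: Optional[Landmark] = None
    best_angle = math.radians(max_angle_deg)
    for landmark in mappings.get(location, []):
        to_landmark = tuple(p - d for p, d in zip(landmark.position, device_position))
        angle = _angle_between(pointing_direction, to_landmark)
        if angle <= best_angle:
            best, best_angle = landmark, angle
    return best.artifact if best is not None else None


# Example data: two locations, each with its own set of mappings.
mappings = {
    "living_room": [
        Landmark("photograph", (2.0, 1.0, 0.0), "playlist:jazz"),
        Landmark("lamp", (0.0, 1.0, 2.0), "contact:alice"),
    ],
    "office": [
        Landmark("clock", (2.0, 1.0, 0.0), "app:calendar"),
    ],
}

device = (0.0, 1.0, 0.0)
print(select_artifact(mappings, "living_room", device, (1.0, 0.0, 0.0)))
print(select_artifact(mappings, "office", device, (1.0, 0.0, 0.0)))
```

In this sketch, the same pointing gesture retrieves a different artifact in each location because the lookup is keyed by location first. Pointing away from every landmark returns `None`, so a stray button press selects nothing.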

Images and Videos

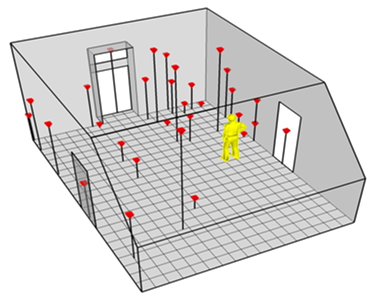

Different locations mapped to digital objects

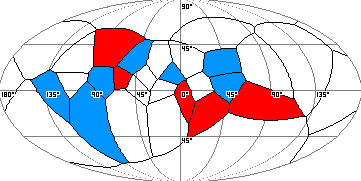

Object map from the user’s forward perspective

Publications

- Reetz, A., Gutwin, C., Cockburn, A., and Mandryk, R., WorldPointing: Improving Eyes-Free Selection by Storing Digital Artifacts in the Real-World Environment, Technical Report HCI-2011-2, University of Saskatchewan.

- Cockburn, A., Quinn, P., Gutwin, C., Ramos, G., and Looser, J., Air pointing: Design and evaluation of spatial target acquisition with and without visual feedback, Int. J. Hum.-Comput. Stud. 69(6): 401-414 (2011).

Partners

CFI – NSERC – SurfNet
